- #Pip install jupyter notebook while running how to#

- #Pip install jupyter notebook while running code#

- #Pip install jupyter notebook while running windows 7#

- #Pip install jupyter notebook while running download#

#Pip install jupyter notebook while running windows 7#

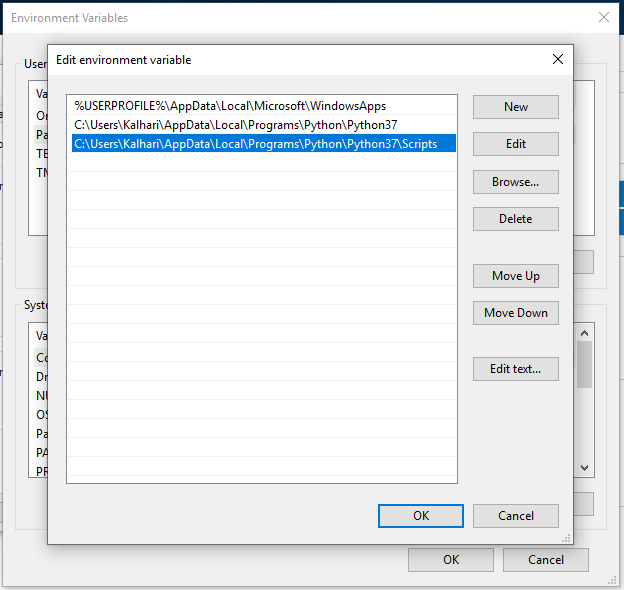

After getting all the items in section A, let’s set up PySpark.

1. Unpack the .tgz file from the Spark distribution in item 1 by right-clicking the file icon and selecting 7-zip > Extract Here. For example, I unpacked with 7-zip from step A6 and put mine under D:\spark\spark-2.2.1-bin-hadoop2.7
2. Move the winutils.exe downloaded in step A3 to the \bin folder of the Spark distribution. For example, D:\spark\spark-2.2.1-bin-hadoop2.7\bin\winutils.exe
3. Add environment variables: the environment variables let Windows find where the files are when we start the PySpark kernel. You can find the environment variable settings by putting “environ…” in the search box. The variables to add are, in my example, Name

In the same environment variable settings window, look for the Path or PATH variable, click Edit, and add D:\spark\spark-2.2.1-bin-hadoop2.7\bin to it. In Windows 7, separate the values in Path with a semicolon (;).
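
To make the Path edit concrete, here is a minimal sketch of the same change applied to an environment dictionary in Python instead of the Windows settings dialog. The function name `spark_env` is hypothetical, and `SPARK_HOME` is an assumption: it is the variable name Spark conventionally uses, but the list of variables in the text above is cut off, so treat it as illustrative only.

```python
import os


def spark_env(spark_home, base_env=None, sep=";"):
    """Return a copy of base_env with SPARK_HOME set and <spark_home>/bin on Path.

    spark_env and SPARK_HOME are illustrative assumptions, not names taken
    from the guide. sep defaults to ";" because that is the separator
    Windows uses between Path values.
    """
    env = dict(os.environ if base_env is None else base_env)
    bin_dir = spark_home.rstrip("\\") + "\\bin"
    env["SPARK_HOME"] = spark_home

    # Windows treats Path and PATH as the same variable, so reuse whichever exists.
    path_key = next((k for k in env if k.upper() == "PATH"), "Path")
    entries = [p for p in env.get(path_key, "").split(sep) if p]

    # Append the Spark bin folder only if it is not already listed.
    if bin_dir.lower() not in (p.lower() for p in entries):
        entries.append(bin_dir)
    env[path_key] = sep.join(entries)
    return env


example = spark_env(
    "D:\\spark\\spark-2.2.1-bin-hadoop2.7",
    base_env={"Path": "C:\\Windows"},
)
print(example["Path"])
```

The result only affects the dictionary that is returned; passing it to `subprocess.run(..., env=example)` would apply it to a child process, while the system-wide setting still has to be made in the Windows dialog described above.
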

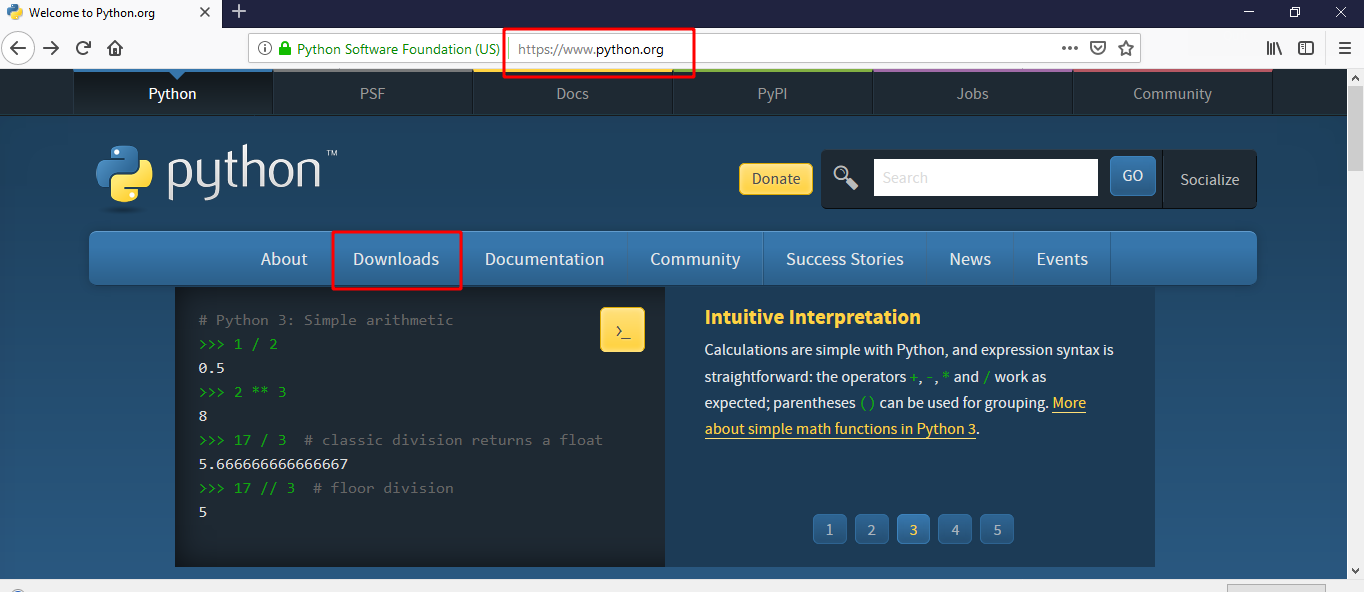

#Pip install jupyter notebook while running download#

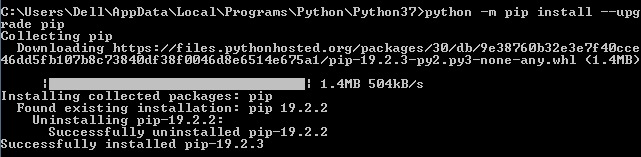

I’ve tested this guide on a dozen Windows 7 and 10 PCs in different languages.

A. Items needed:

1. The Spark distribution (a .tgz file).
2. Python and Jupyter Notebook. You can get both by installing the Python 3.x version of the Anaconda distribution.
3. winutils.exe, a Hadoop binary for Windows, from Steve Loughran’s GitHub repo. Go to the Hadoop version that corresponds to your Spark distribution and find winutils.exe under /bin.
4. The findspark Python module, which can be installed by running python -m pip install findspark in either the Windows command prompt or Git Bash, provided Python is installed in item 2. You can find the command prompt by searching for cmd in the search box.
5. Java. If you don’t have Java or your Java version is 7.x or less, download and install Java from Oracle. I recommend getting the latest JDK (current version 9.0.1).
6. 7-zip. If you need to unpack a .tgz file on Windows, you can download and install 7-zip.
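
Before moving on, it can help to check which of these items are already present. The following is a rough sketch using only the standard library; `check_prerequisites` is a hypothetical helper, and it only tests whether `java` and `jupyter` are on the Path and whether `findspark` can be imported. It does not verify versions, Spark, or winutils.exe.

```python
import importlib.util
import shutil
import sys


def check_prerequisites():
    """Report which of the listed items look available on this machine.

    A hypothetical helper for illustration: it checks presence only,
    not versions, and does not look for Spark or winutils.exe.
    """
    return {
        "python3": sys.version_info >= (3,),
        "java_on_path": shutil.which("java") is not None,
        "jupyter_on_path": shutil.which("jupyter") is not None,
        "findspark_importable": importlib.util.find_spec("findspark") is not None,
    }


for name, ok in check_prerequisites().items():
    print(f"{name}: {'ok' if ok else 'missing'}")
```
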

#Pip install jupyter notebook while running how to#

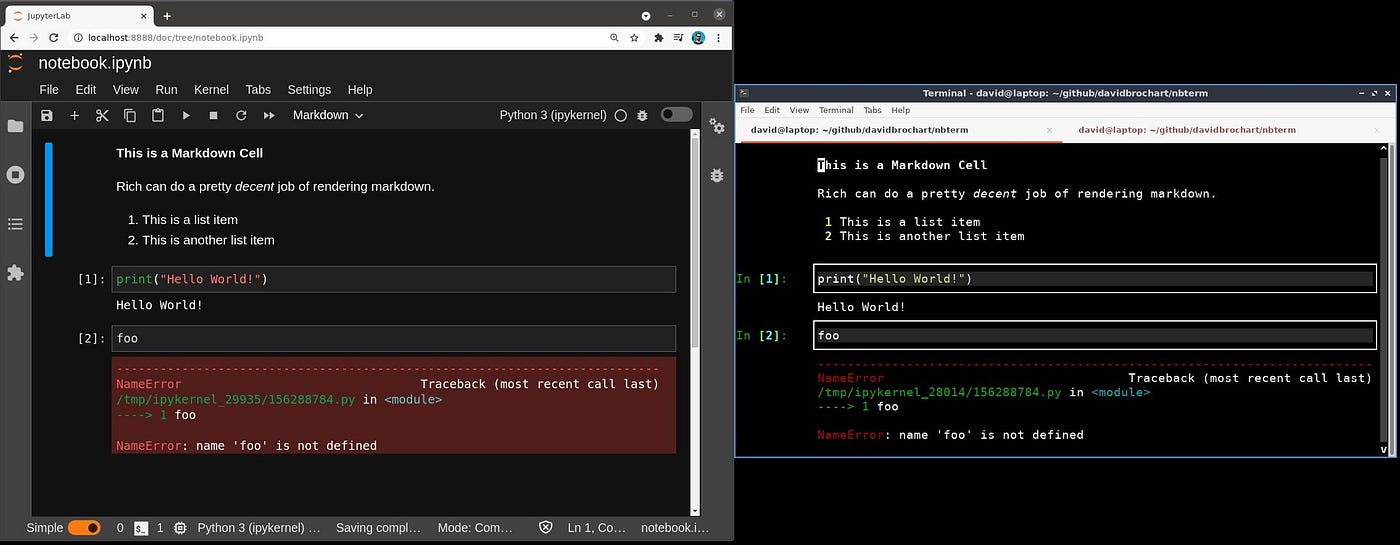

In this post, I will show you how to install and run PySpark locally in Jupyter Notebook on Windows.

#Pip install jupyter notebook while running code#

When I write PySpark code, I use Jupyter Notebook to test my code before submitting a job on the cluster.

When I try to install Jupyter through pip, I get the error shown below.

    ERROR: Command errored out with exit status 1:
    command: 'c:\users\himakernani\appdata\local\programs\python\python39\python.exe' 'c:\users\himakernani\appdata\local\programs\python\python39\lib\site-packages\pip\_vendor\pep517\_in_process.py' build_wheel 'C:\Users\HIMAKE~1\AppData\Local\Temp\tmp8ldhmne8'
    cwd: C:\Users\HIMAKERNANI\AppData\Local\Temp\pip-install-m2s59uta\argon2-cffi
    copying src\argon2\exceptions.py -> build\lib.win-amd64-3.9\argon2
    copying src\argon2\low_level.py -> build\lib.win-amd64-3.9\argon2
    copying src\argon2\_ffi_build.py -> build\lib.win-amd64-3.9\argon2
    copying src\argon2\_legacy.py -> build\lib.win-amd64-3.9\argon2
    copying src\argon2\_password_hasher.py -> build\lib.win-amd64-3.9\argon2
    copying src\argon2\_utils.py -> build\lib.win-amd64-3.9\argon2
    copying src\argon2\__init__.py -> build\lib.win-amd64-3.9\argon2
    copying src\argon2\__main__.py -> build\lib.win-amd64-3.9\argon2
    error: Microsoft Visual C++ 14.0 or greater is required.

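
The log above shows pip building argon2-cffi from source and stopping because no C compiler is installed. As a small sketch of how to read such output, the hypothetical helper below pulls out the package being built and flags the compiler message. It assumes the build directory looks like `pip-install-<random>\<package>`, as it does in this log; other pip versions may lay out their temporary folders differently.

```python
import re


def diagnose_pip_build_failure(log_text):
    """Return (package, needs_msvc) guessed from pip's build output.

    Hypothetical helper: it relies on the temp-folder pattern seen in
    the log above, which is an assumption rather than a documented
    pip interface.
    """
    # The folder after pip-install-<random> is named after the package being built.
    match = re.search(r"pip-install-[^\\/\s]+[\\/]([^\\/\s]+)", log_text)
    package = match.group(1) if match else None
    needs_msvc = "Microsoft Visual C++ 14.0 or greater is required" in log_text
    return package, needs_msvc


sample = (
    "cwd: C:\\Users\\HIMAKERNANI\\AppData\\Local\\Temp\\pip-install-m2s59uta\\argon2-cffi\n"
    "error: Microsoft Visual C++ 14.0 or greater is required.\n"
)
print(diagnose_pip_build_failure(sample))  # ('argon2-cffi', True)
```

When the second value is true, the package needs a compiler to build on this machine, so the choice is between installing the Microsoft C++ build tools and using a Python and pip combination for which a prebuilt wheel of that package is available.
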
Installing with the system pip on Python 2.7 produces this output and traceback:

      Downloading jupyter-1.0.0-py2.py3-none-any.whl
    Downloading/unpacking ipywidgets (from jupyter)
      Downloading ipywidgets-6.0.0-py2.py3-none-any.whl (46kB): 46kB downloaded
      File "/usr/lib/python2.7/dist-packages/pip/basecommand.py", line 122, in main
      File "/usr/lib/python2.7/dist-packages/pip/commands/install.py", line 278, in run
        requirement_set.prepare_files(finder, force_root_egg_info=self.bundle, bundle=self.bundle)
      File "/usr/lib/python2.7/dist-packages/pip/req.py", line 1266, in prepare_files
      File "/usr/lib/python2.7/dist-packages/pkg_resources.py", line 2291, in requires
      File "/usr/lib/python2.7/dist-packages/pkg_resources.py", line 2484, in _dep_map
        self._dep_map = self._compute_dependencies()
      File "/usr/lib/python2.7/dist-packages/pkg_resources.py", line 2508, in _compute_dependencies
        parsed = next(parse_requirements(distvers))
      File "/usr/lib/python2.7/dist-packages/pkg_resources.py", line 45, in
      File "/usr/lib/python2.7/dist-packages/pkg_resources.py", line 2605, in parse_requirements
        line, p, specs = scan_list(VERSION,LINE_END,line,p,(1,2),"version spec")
      File "/usr/lib/python2.7/dist-packages/pkg_resources.py", line 2573, in scan_list
        raise ValueError("Expected "+item_name+" in",line,"at",line)